Students create 3-D reconstruction of Big Shot

Chester F. Carlson Center for Imaging Science creates companion image

Carl Salvaggio, David Nilosek and Katie Salvaggio, Center for Imaging Science

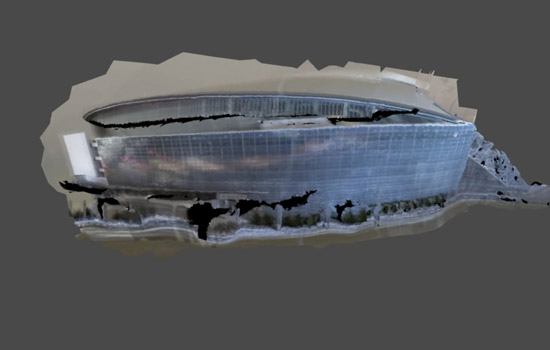

A three-dimensional reconstruction of the Big Shot taken of Cowboys Stadium in Arlington, Texas.

The Big Shot Wrap-up Party on May 1 celebrated the success of the Rochester Institute of Technology’s 28th community photo project and the logistical feat of illuminating Cowboys Stadium in Arlington, Texas, on March 23, primarily with flashlights and camera flashes.

Carl Salvaggio, professor in the Chester F. Carlson Center for Imaging Science, and his doctoral students brought a new dimension to this year’s Big Shot that they hope to extend to next year’s project. Salvaggio and imaging science graduate students David Nilosek and Katie Salvaggio (no relation) produced a three-dimensional reconstruction of Cowboys Stadium.

The reconstruction was a way for the Center for Imaging Science to participate in the RIT community tradition and to test an image processing technique called “structure from motion” under different conditions.

The technique is common to the field of computer vision and is applied, in this case, to teach computers to make choices when scanning an image. Algorithms match pixels between two photographs and build depth from otherwise flat images. The more connections made between pixels, the more robust the three-dimensional reconstruction.

“As you find things at different distances away from your camera, the distance that pixel moves from one frame to another can be translated back to how far away that object is from the camera itself,” Carl Salvaggio says. “That’s how you get depth perception.”
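The relationship Salvaggio describes can be sketched in a few lines of Python. This is a simplified illustration, not the team's software: it assumes an idealized pair of identical, side-by-side pinhole cameras, and the function name, focal length, camera spacing and pixel shifts are all hypothetical values chosen for the example. Full structure from motion must also estimate the camera positions themselves.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Estimate distance to a point seen by two side-by-side cameras.

    Assumes an idealized, rectified pinhole camera pair (an assumption
    for this sketch, not a description of the Big Shot setup).
    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two camera positions, in meters
    disparity_px:    how far the matched pixel shifts between the two frames
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical numbers: a feature that shifts a lot between frames is
# close to the cameras; one that barely shifts is far away.
focal_length_px = 2000.0
baseline_m = 1.0
near = depth_from_disparity(focal_length_px, baseline_m, 40.0)
far = depth_from_disparity(focal_length_px, baseline_m, 10.0)
print(near, far)  # 50.0 200.0
```

In this simplified model, the larger the pixel shift, the closer the object, which is the depth cue the quote refers to.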

Nilosek and Katie Salvaggio are alumni of the undergraduate imaging science program. Nilosek will receive his Ph.D. this year, and Katie Salvaggio is finishing her third year. Their respective research uses powerful computers in the Center for Imaging Science to process hundreds of aerial images of buildings shot in daylight. Nilosek’s research reconstructs structures and models their material components; Katie Salvaggio’s work focuses on developing an accuracy measurement.

They encountered a different set of challenges in collecting data and reconstructing the Big Shot image of Cowboys Stadium. The exercise tested the algorithm’s ability to process nighttime photography shot on the ground from only 48 different views.

The Big Shot team—led by RIT professors and coordinators Michael Peres, Dawn and Bill Dubois, and Willie Osterman from the School of Photographic Arts and Sciences—took an extended exposure of the stadium from a construction lift 40 feet in the air, while the imaging scientists orchestrated multiple shots on the ground. They arranged an ensemble of photography students outfitted with 12 donated Nikon cameras and Manfrotto tripods in various locations in the parking lot to take sets of simultaneous shots.

The imaging science team had a hunch that the stadium’s glass shell would be problematic for the image processing algorithms. Their previous attempt to shoot Student Innovation Hall at night “failed miserably,” Katie Salvaggio says, because the expanse of glass fooled the algorithms searching for matching pixels in the data. The trio took a gamble and flew to Texas two-and-a-half days ahead of the event to determine where to position the cameras.

“It was basically a lot of trial and error,” Nilosek says. “We had to try all the configurations we could think of and pick what gave us the best looking results.”

They considered different parameters—the distance between the cameras, the distance from the camera to the object, the number of positions of the camera, the physical position of the camera relative to the building, where the camera was pointed and how the stadium was framed.

“We took pictures, downloaded them and started processing them in the parking lot,” Carl Salvaggio says. “When that didn’t work, we took more pictures, downloaded them and processed them again.”

The controlled lighting during the Big Shot illuminated the parking lot, so the light would reflect off the stadium.

“They illuminated the building straight up the side,” Carl Salvaggio adds. “They lit parts that we couldn’t see during the day. I think it turned out that we had more features to match at night than we did during the day, which is opposite of what we thought would have happened.”

They downloaded PhotoScan, a commercial software package produced in Russia, to knit together the images of the stadium on Carl Salvaggio’s laptop computer. The data had to be color corrected before being processed with the structure from motion software. Otherwise, the algorithms, which rely on color to find matches, would pass over pixels of the same object if they were shaded differently.
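To show why color correction helps matching, here is a minimal Python sketch of one common approach, gray-world white balancing. The article does not say which method was used on the Big Shot photos, so the technique, the function name and the sample pixel values are assumptions made purely for illustration.

```python
def gray_world_balance(pixels):
    """Rescale each color channel so the image's average color is neutral gray.

    pixels: list of (r, g, b) tuples with values from 0 to 255.
    Gray-world balancing is an illustrative stand-in here, not the
    method the article's team is known to have used.
    """
    n = len(pixels)
    # Average value of each channel across the whole image.
    means = [sum(p[c] for p in pixels) / n for c in range(3)]
    gray = sum(means) / 3.0
    # Gain that pulls each channel's average toward the common gray level.
    gains = [gray / m if m > 0 else 1.0 for m in means]
    return [
        tuple(min(255, round(p[c] * gains[c])) for c in range(3))
        for p in pixels
    ]


# Hypothetical pixels from a photo with a strong orange cast.
warm_photo = [(200, 100, 50), (100, 50, 25)]
balanced = gray_world_balance(warm_photo)
print(balanced)  # [(117, 117, 117), (58, 58, 58)]
```

After balancing, the same surface photographed under differently tinted light ends up with similar color values, which gives a color-based matcher a better chance of pairing the pixels.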

“We had 48 photos and one amazing biomed photo student—Ryan Harriman, the CIAS college delegate,” Nilosek says. “We were all sitting in the Cowboy press room, and he color-balanced all the photos in about 10 minutes to get them ready to go.”

The imaging science team waited 90 minutes for the software to process the images. Back at RIT, Nilosek created a video of the reconstruction, showing the image from different angles.

This year’s Big Shot gave the Center for Imaging Science a chance to collaborate with the School of Photographic Arts and Sciences and to become part of an RIT tradition.

“We were able to walk into a group that had been doing this for a long time and they were supportive of us getting involved and excited about us trying something new,” Carl Salvaggio says. “We would love to be part of the Big Shot in the future.”