Teaching computers to learn

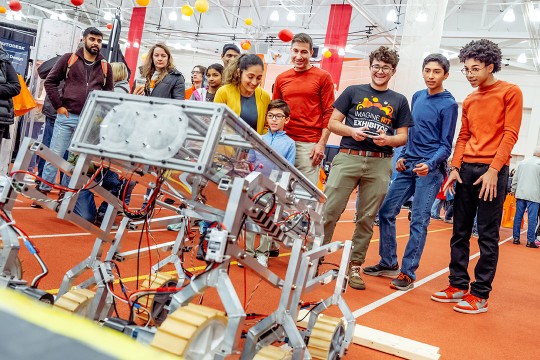

A. Sue Weisler

Christopher Kanan, right, assistant professor of imaging science, is teaching computers how to do more than one task at a time.

Using a machine learning technique called deep learning, computing systems now rival humans at many tasks, including image and speech recognition.

“This relies on giving a computer large amounts of data to learn from,” said Christopher Kanan, associate director of the Center for Human-Aware Artificial Intelligence and co-lead of the machine learning and perception pillar.

While the technology has rapidly progressed, Kanan and his group are trying to make deep learning even more versatile.

“We humans can intuit and know things about our own memories. We learn over time, but that complexity may be a long way off for computers,” he said.

Deep learning systems can classify images, recognize faces and convert speech to text as well as people can. Each system has its specialty, but unlike humans, these systems cannot do more than one task.

To address this gap, Kanan’s research team is building systems capable of performing arbitrary image-understanding tasks.

For example, a user can upload a photo and then ask questions about it. An early version of this technology can help visually impaired users get information about images on the web, or ask questions about objects in their homes by photographing them.

Kanan’s group is also trying to make systems understand their own limitations.

“The one thing systems don’t do is respond, ‘I don’t know.’ There is always an output or result. We want to make systems that know what they don’t know, can learn after being deployed and can do multiple tasks.

“People think AI already has these abilities, but there is a very long way to go before machines match human levels,” said the assistant professor of imaging science. “How can we make a system that learns immediately and can update its beliefs about the world based on new information? If you want to head toward useful, human-like intelligence, this is the prerequisite for it.”