DEAR

Presentation

Abstract

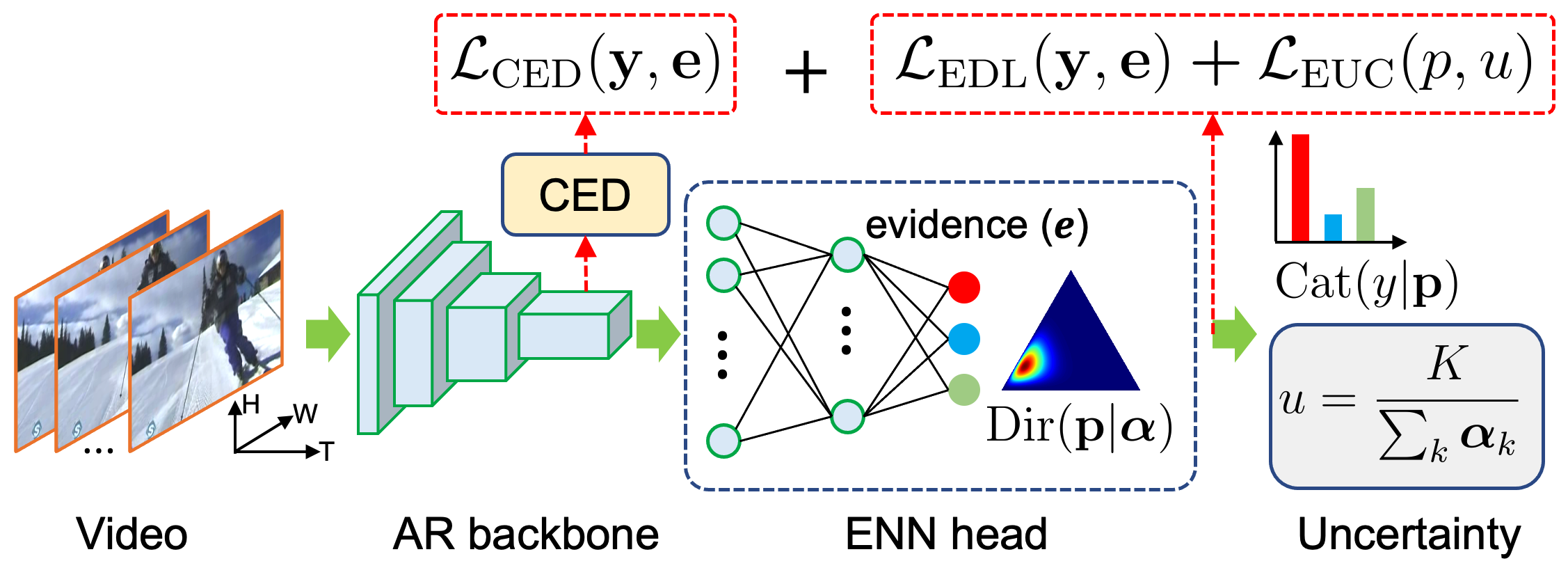

In real-world scenarios, human actions often fall outside the distribution of the training data, which requires a model to both recognize known actions and reject unknown ones. Compared with images, video actions are harder to recognize in an open-set setting because of the uncertain temporal dynamics and static bias of human actions. In this paper, we propose a Deep Evidential Action Recognition (DEAR) method to recognize actions in an open testing set. Specifically, we formulate the action recognition problem from the evidential deep learning (EDL) perspective and propose a novel model calibration method to regularize EDL training. In addition, to mitigate the static bias of video representations, we propose a plug-and-play module that debiases the learned representation through contrastive learning. Experimental results show that DEAR achieves consistent performance gains across multiple mainstream action recognition models and benchmarks.
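To make the EDL perspective more concrete, the following is a minimal sketch of how a generic evidential classifier can turn class logits into a Dirichlet-based prediction and an uncertainty score used to reject unknown actions. It follows the standard EDL formulation (evidence, Dirichlet parameters `alpha = evidence + 1`, uncertainty `u = K / S`) and is not the DEAR implementation. The function names, the exponential evidence function, and the `threshold` value are assumptions chosen for illustration.

```python
import math


def edl_uncertainty(logits, evidence_fn=math.exp):
    """Map class logits to expected probabilities and an uncertainty score.

    Assumption: evidence is obtained with an exponential function; other
    non-negative functions (e.g. ReLU or softplus) are also common choices.
    """
    num_classes = len(logits)
    evidence = [evidence_fn(z) for z in logits]   # non-negative evidence per class
    alpha = [e + 1.0 for e in evidence]           # Dirichlet concentration parameters
    strength = sum(alpha)                         # Dirichlet strength S
    probs = [a / strength for a in alpha]         # expected class probabilities
    uncertainty = num_classes / strength          # u = K / S, lies in (0, 1]
    return probs, uncertainty


def is_unknown(logits, threshold=0.5):
    """Flag a sample as unknown when its uncertainty exceeds the threshold.

    The threshold here is a hypothetical placeholder, not a value from the paper.
    """
    _, uncertainty = edl_uncertainty(logits)
    return uncertainty > threshold


if __name__ == "__main__":
    confident = [5.0, 0.0, 0.0]      # strong evidence for class 0
    ambiguous = [-3.0, -3.0, -3.0]   # almost no evidence for any class
    print(edl_uncertainty(confident))
    print(is_unknown(confident), is_unknown(ambiguous))
```

In this sketch, a sample with little total evidence yields an uncertainty close to 1 and is rejected as unknown, while a sample with strong evidence for one class yields a low uncertainty and is classified normally.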

Result Summary

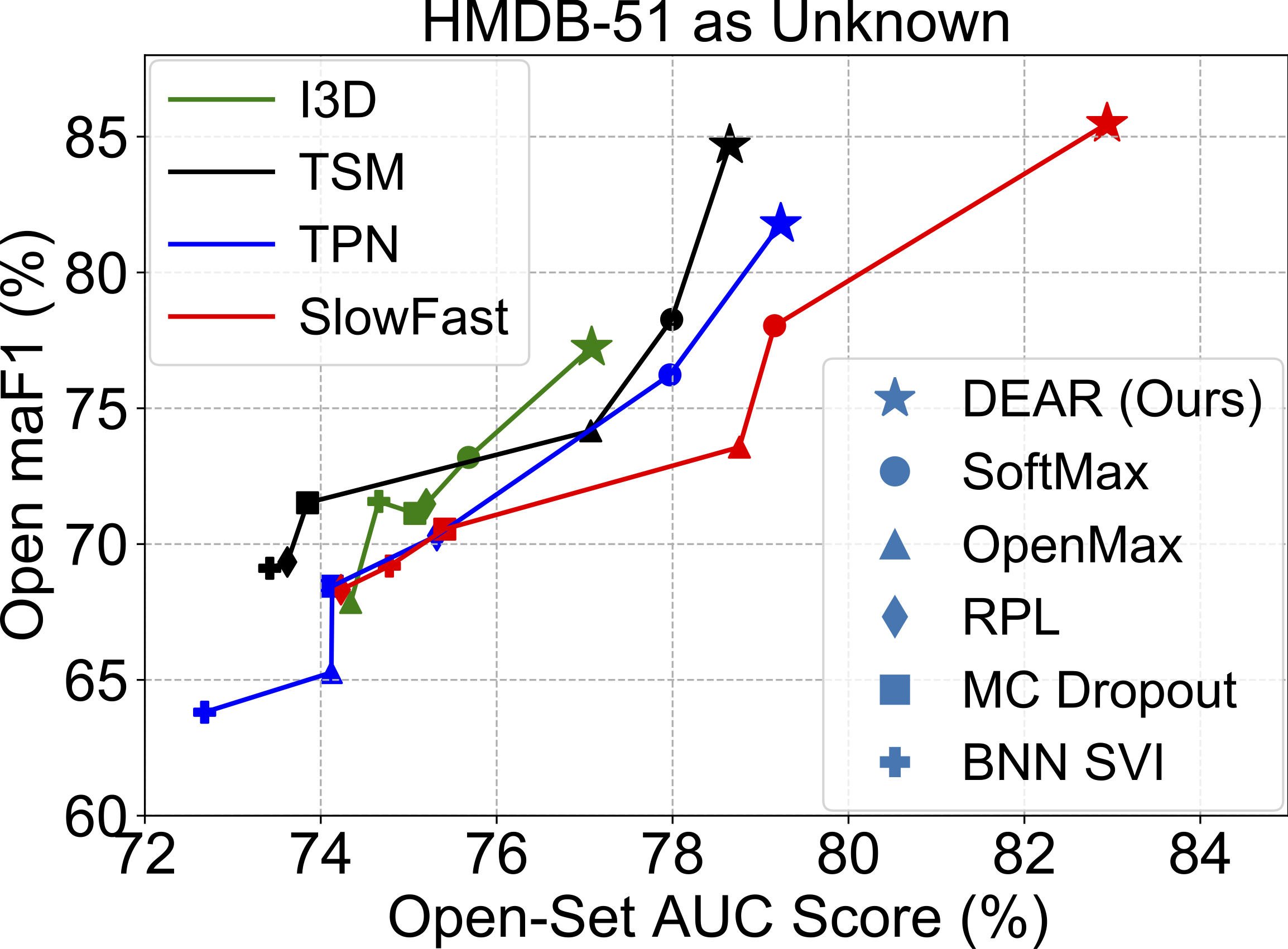

Open Set Action Recognition:


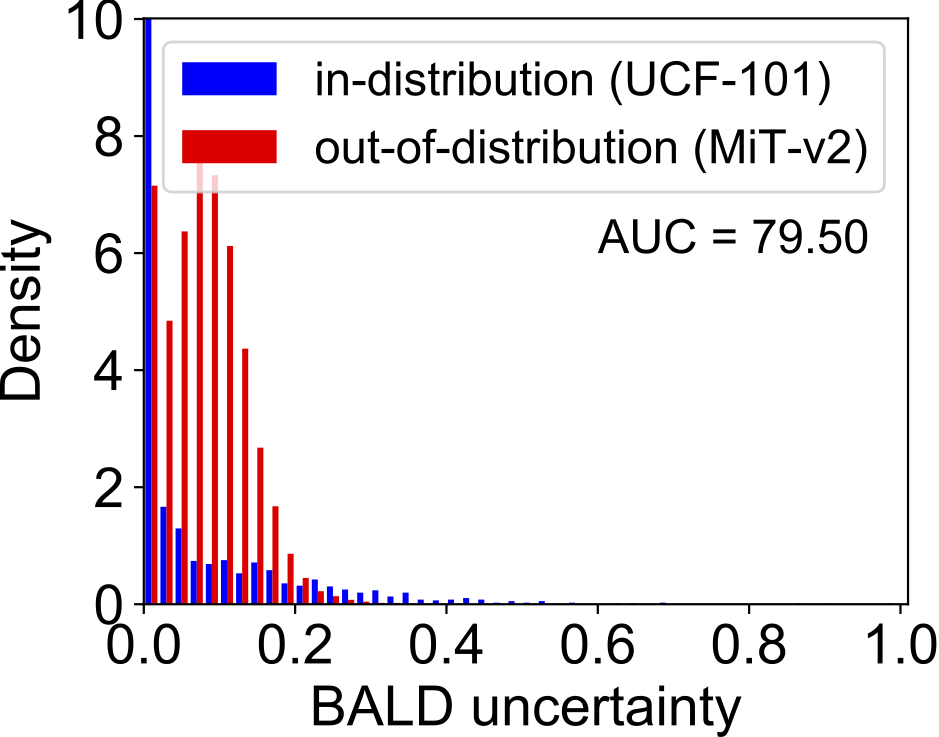

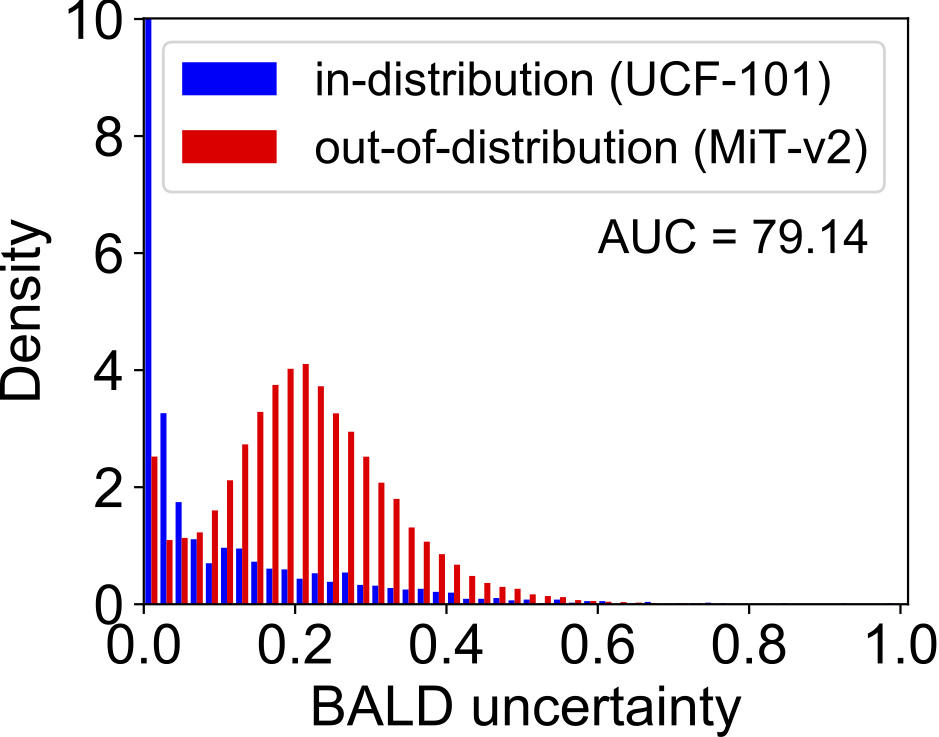

Out-of-Distribution Detection:


Citation

If you find our work helpful to your research, please cite:

@inproceedings{BaoICCV2021DEAR,