Projects

Experiences

Collaborative Composite Image

The Collaborative Composite Image course is an interdisciplinary class at the Rochester Institute of Technology that brings together students from our Photography and 3D Digital Graphics programs. The project includes an augmented reality assignment in which mobile devices running a course app powered by Aurasma are used to view student animations of ten paintings in the permanent collection of the Memorial Art Gallery.

David Halbstein, Susan Lakin

Watch the video

Digital Docents: Historical NY Stories in Virtual and Augmented Reality

This project brings together students and faculty in liberal arts, art and design, and computer science at RIT to deliver humanities-rich content in the form of a digital docent. Modeled after a resident of western New York in the 19th century, the docent will guide visitors online and onsite at Genesee Country Village & Museum, the third-largest living history museum in the US and the largest in NY state. The format uses storytelling as a historical device: humanities content is presented conversationally, with pauses for question and answer. It is delivered through augmented reality, in which devices superimpose digital assets over real elements in physical spaces, and through constructed environments in virtual reality, as a means of demonstrating enhanced storytelling capabilities. The interactive nature of the storytelling enables visitors to reflect upon the content and context of the narrative. We propose to develop five narratives to engage visitors in the historical content and context of western New York via three methods of access: an online virtual world; augmented reality (AR) enhancements to be used online or at the museum using one’s own device; and AR enhancements viewable onsite with a museum-provided AR device.

Juilee Decker, Amanda Doherty, Joe Geigel, Gary D. Jacobs

Mixed Reality Theatre

One goal of live theatre is to transport the audience, via sets, props, lighting, and effects, to another place to experience the live telling of a story. In this work, we address the idea of Mixed Reality Theatre: live performance that needs no physical sets or props on the physical stage. Instead, stage elements and effects are completely digital and presented through augmented reality (AR) devices. This work was recently funded by an Epic Games MegaGrant.

Joe Geigel, David Munnell, Marla Schweppe

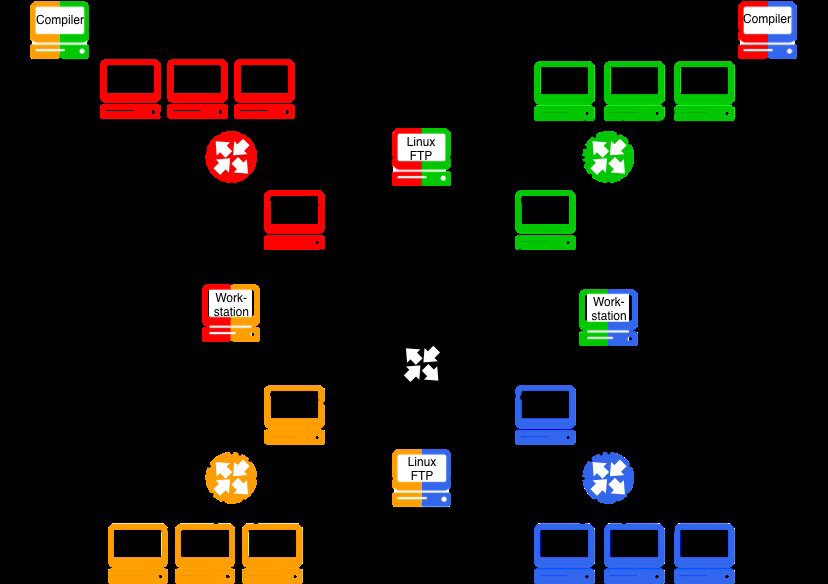

Visualization of Cybersecurity Competitions

The goal of this project is to raise awareness and encourage the learning of cybersecurity principles by making competitions appealing to a wider audience. To make events compelling, attractive, and watchable, the researchers are developing systems that support visualizations and make the transactions between teams in different cybersecurity competitions easy to comprehend. By informing and educating the audience on the intricacies of the competition through engaging visualizations, we open cybersecurity competitions up to a world beyond just participants and can potentially attract new talent into the field. Our team seeks to build prototype visualizations for key actions in various student cybersecurity competitions and to assess spectator understanding of key principles of the competitions.

David I. Schwartz, Chao Peng, Chad E Weeden, Daryl Johnson, Bill Stackpole

VR Cary

The RIT Cary Collection is one of the country's premier libraries on graphic communication history and practices. The VR Cary Collection aims to let others access and experience artifacts in ways not possible inside a traditional environment. To learn more about the original collection, visit the RIT Cary Collection website. Foundational work on the VR Cary Collection was made possible through a Breakthru Grant from the Rochester Regional Library Council.

Shaun Foster, Steven Galbraith

Learn more

Research

A multimodal system for data visualization

As technology continues to advance, the volume of imagery from intelligence, surveillance, satellite, and reconnaissance systems has become very large and is still growing. Analysts need novel approaches that enhance their ability to perform data analysis tasks for extended periods of time. This project researches and develops a natural 3D user interface presented in an immersive virtual environment, which will improve an analyst’s ability to detect and comprehend relevant visual targets and patterns within a large visual media collection. The 3D user interface will be driven by multimodal input from a combination of voice commands, hand gestures, and eye gaze. It will allow users to manipulate and communicate with data in much the same way they interact with things in everyday life.

Chao Peng

Learn more

Color and Material Appearance in AR

Augmented reality (AR) utilizes see-through displays to mix virtual objects into real-world scenes. The optical blending of virtual and real stimuli is apparently interpreted visually with an understanding of transparency. AR users can attend to the AR foreground, discounting to some extent the bleed-through of the background elements. Alternatively, they can attend to real-world objects manipulated by AR overlays, partially discounting their influence. Aiming to build a color appearance model for optical see-through AR systems, this project involves psychophysical experiments, optical measurements, and models of the human visual system and its responses. This work is supported by an NSF CAREER award (1942755) for the period 2020 - 2025.

Michael Murdoch

Learn more

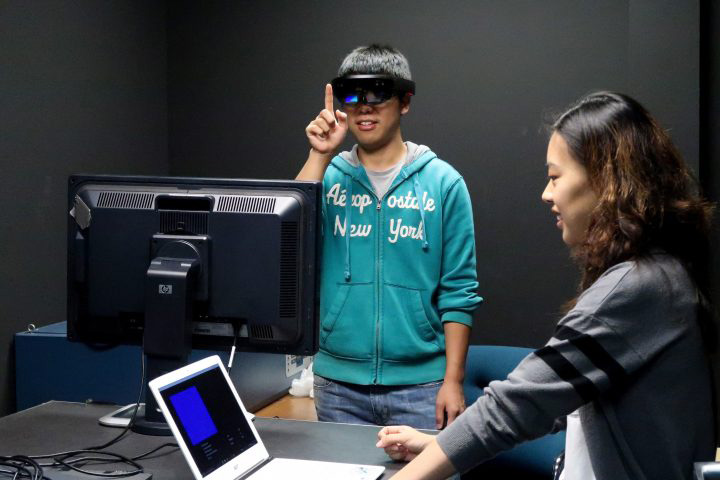

Development and Assessment of Virtual Reality Paradigms for Gaze Contingent Visual Rehabilitation

Stroke-induced occipital damage is an increasingly prevalent, debilitating cause of partial blindness, which afflicts about 1% of the population over age 50. Until recently, this condition was considered permanent. Over the last 10 years, the Huxlin lab at the University of Rochester has pioneered a training method to recover a range of visual abilities in previously blind fields. Unfortunately, effectiveness is severely reduced away from the laboratory, where the lack of an eye tracker prevents targeted stimulus delivery to the edge of the blind field. The proposed work leverages recent advances in eye tracking and virtual reality technology for effective at-home visual rehabilitation. Hardware and software development is accompanied by psychophysical evaluation to validate new at-home rehabilitation paradigms in virtual reality.

Gabriel J. Diaz, Krystal Huxlin (University of Rochester), Ross Maddox (University of Rochester)

Digital preservation and reconstruction of aural heritage

Aural heritage preservation is a form of cultural heritage conservation that documents and recreates the auditory experiential details of culturally important places, enabling virtual interaction through physics-based reconstructions. In this project, we will advance aural heritage preservation and access via digital technologies, codified in a protocol with instructional tutorials that facilitate broad adoption of these new techniques. To make aural heritage viable for researchers across disciplines, we seek to provide conceptual guidelines and software tools that enable the inclusion of aural heritage in Humanities collections and preservation activities, among other potential applications.

Sungyoung Kim, Dr. Doyuen Ko (Belmont University), Dr. Miriam Kolar (Amherst College)

Learn more

Neural Networks for Robust Eye Tracking in Real World and Virtual Environments

Contemporary algorithms for video-based eye tracking involve placing near-infrared cameras close to the eye. Computer vision or trained neural networks for feature detection track the movement of features in the eye images, such as the pupil/iris boundary or the pupil center. These tracked features are then fed into a model for the estimation of gaze direction. We have pioneered, and continue to develop, algorithms for the detection of features in eye tracker imagery, and the most successful, RITnet2, recently won the OpenEDS challenge, organized by Facebook. To overcome the time-consuming and laborious process of hand-labelling a training set of eye imagery, the supervised networks are trained using synthetic imagery with pixel-level semantic ground-truth reconstructions of previously recorded gaze behavior. The rendered imagery approximates the camera positioning and properties used in contemporary eye trackers, and the human avatars used during rendering are representative of individual differences, including those spanning gender, age, and skin tone. These techniques promise a future in which video-based mobile eye trackers work reliably, despite environmental degradations to eye imagery, and despite individual differences in appearance.

Reynold Bailey, Gabriel J. Diaz, Jeff Pelz

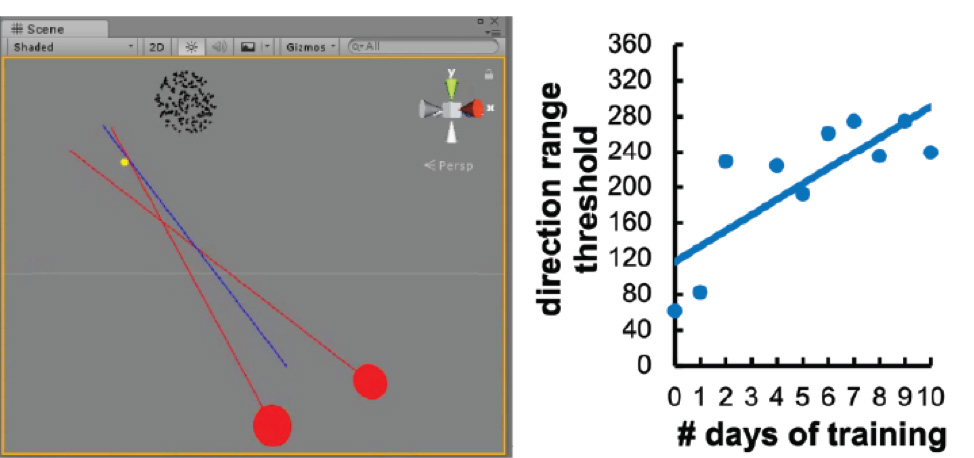

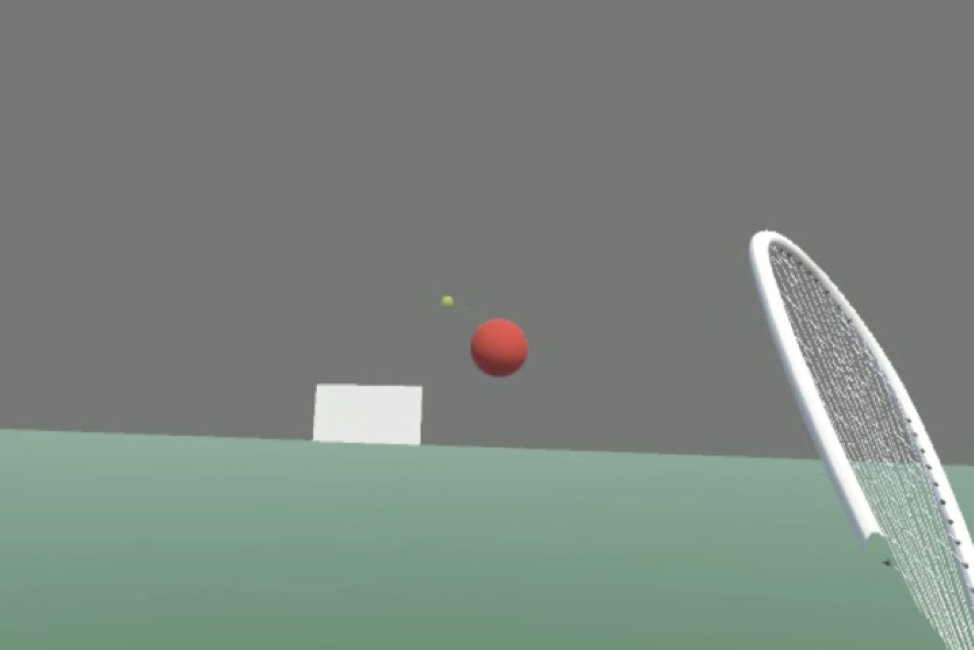

Visual and Motor Coordination of the Eyes and Hands by Prediction in a Virtual Reality Ball Catching Task

When preparing to intercept a ball in flight that is briefly occluded during its approach, humans make predictive saccades ahead of the ball’s current position to a location along the ball’s future trajectory. Although this behavior is ubiquitous amongst novices, extremely accurate, and can reach at least 400 ms into the future, the field of neuroscience currently lacks testable models of prediction and an empirical understanding of how predictive behavior is combined with strategies for online control under less uncertain conditions. To investigate the role of prediction in coordinating movements of the eyes and hands, we immerse subjects in a virtual-reality ball interception task in which a ball’s trajectory can be computationally controlled, systematically manipulated, and recorded for subsequent analysis. In addition, behavior is monitored using a combination of motion capture and eye tracking equipment. The goal of this line of work is to produce empirically informed, testable models of visual prediction and its consequences for visually guided action.

Gabriel J. Diaz

Stories

Changeling: A Story

In this surreal VR mystery game, the player takes on the role of Aurelia, a dream-walker whose gift is the ability to see through the eyes of anyone she touches. She has been asked to find out what is wrong with the baby. As she makes her way to the crib, she touches mother, father, son and daughter in turn. As she contacts each one, she sees their view of the world through the lens of their hope and fear. Each view a different problem; a different style. The objective is to find the truth about the baby - unknown to the family, a changeling.

Elouise Oyzon, David Simkins

Other Girl

A single-player VR interactive story

The other girl is coming - for you. Luckily, you can fly through time and space. Your only chance is to escape into the past. Explore the history and landscape of the New Jersey Pine Barrens as you try to figure out who she is. This is a first-person story exploration game that questions the impact of media and imagination on shaping how we see ourselves and the world around us.

This is an experiment in structuring game narrative through enacted and emergent modes of spatial storytelling. The goal is to avoid cutscenes, extensive dialog, and text for conveying story, relying instead on bits of audio that are randomly associated with objects, on maps used as “cuts,” and on interactivity. The scenes presented in this demo are the introduction, serving in place of a cutscene as, essentially, the first chapter. The themes of the game explore how media (old as well as newer forms) impact the nature of self and our relationship with the world. Additionally, realistically rendered materials are undermined by surreal and dream-like events (scene construction, particle emitters, etc.) to help support and question the nature of reality.

Elizabeth Goins, Tom Davis

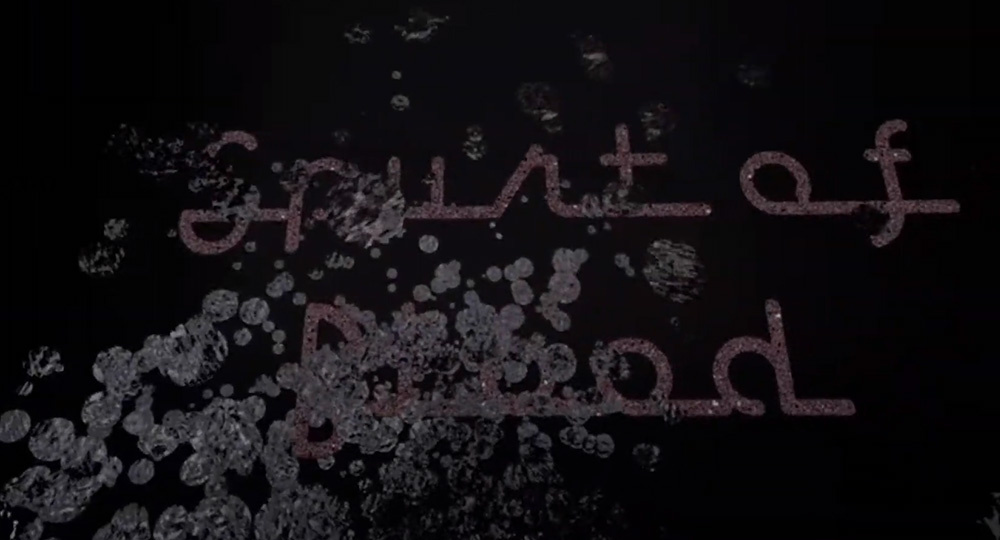

Spurt of Blood

A VR production of the 1925 surrealist play, Jet de Sang. Written by Antonin Artaud, the short play was, for many years, considered unstageable due to the bizarre stage directions. The play presents disconnected images of creation and destruction, gluttony and lust, sex and violence in a manner meant to hammer at the conscious mind in order to reach the subconscious. The VR production interprets the script to explore the intersection of performance and single-player experiences. Through interaction, particle effects and sound, the player is immersed in the themes of the play.

Elizabeth Goins, Andy Head

Watch video

Swing

Swing is a narrative, virtual-reality film combining 2D, 3D and 2.5D animation techniques. The story unfolds in overlapping acts that depict the internal and external struggles of a frustrated girl who is attempting and failing to swing on a playground swing. This immersive experience incorporates crowd-sourced stories from over forty contributors and was animated in Maya (3D), TVPaint (2D), and Oculus Quill (2.5D), then combined in Unreal Engine. It was funded in part through an RIT FEAD grant and an Advance Connect grant, with support from MAGIC Spell Studios. Swing is an Official Selection of festivals worldwide, including Animaze: Montreal International Animation Festival, Bucheon International Fantastic Film Festival (BIFAN) in South Korea, and Demetera International Film Festival in Paris, where it won Best VR Short.

Mari Jaye Blanchard, Mark Reisch

Learn more