Fram Chair Award

Fram Chair Award for Excellence in Applied Critical Thinking at Imagine RIT

The Fram Chair seeks to recognize excellence in the application of critical thinking used in the preparation and creation of an exhibit at Imagine RIT. This recognition is not for the exhibit itself, but for the thought processes used to arrive at or create the exhibit.

Application of critical thinking connects the performance chain of knowing-doing-creating. At RIT, applied critical thinking is engaged thinking used to effectively comprehend and analyze a context, develop a point of view, and implement a strategy to solve a complex problem or creatively manifest new ideas, both individually and collectively.

Eligibility

- Any team presenting or exhibiting at Imagine RIT

- At least one of the team members must be an actively enrolled RIT student

Note: The names of all RIT contributors (RIT students, faculty, staff) must be listed on the application, and the group type (small group, large group) must be specified.

Application Process

All deadlines must be met in order for the application to be considered, and the final exhibit must be presented at Imagine RIT.

Applications for the 2026 Fram Award are now open and will close on April 1, 2026. Please click HERE to apply.

Award

Winners will be announced before Imagine RIT, and award certificates will be presented at Imagine RIT on April 25, 2026.

Awarded groups will share $250 in Tiger Bucks.

This year the committee seeks to recognize excellence in the following categories:

- Small group exhibit (1-5 participants)

- Large group exhibit (6 or more participants)

Award Recipients

- Large Group Winner: The RIT Iceberg: Exploring RIT Student Culture: Igor Polotai, Professor Jim Rankin, Elyana Medcalf, Michael Norton, Ava Curby, Daisy Roberson, Dannahe Kuntz, Dayne Stein, Evi Schwartz, William Walker, Alexa Amoriello, Andrew Steven Hernandez, Jay Miller, Connor Vallandigham, Emily Ott, Maya Smith, August Michaels, Samantha Beale, Hudson Ward, Christine Espeleta, Emmett Beggs.

The RIT Iceberg Exhibit seeks to educate and engage visitors by exhibiting and exploring RIT’s vast student culture. It builds on the prior work of Igor Polotai, who cataloged more than one hundred entries of RIT folklore, obscure history, and fun facts. This exhibit expands the original Iceberg, showcasing a curated selection of the origins of jokes, sayings, legends, pranks, stories, folklore, and material culture that shape the RIT student experience. The exhibit consists of a museum display of student-created objects, an interactive activity in which visitors can add themselves to the folklore tapestry, and a showcase of the new Iceberg website.

- Small Group Winner: Leveraging 3D Ceramic Printing for Personalized Bone Implants: Leanna Frasch, Jillian Silva, Jade Myers, and Denis Cormier.

This exhibit showcases the potential of novel ceramic 3D printing technologies for designing personalized implants. The process workflow begins with computer-aided design (CAD) and simulation, demonstrating how patient-specific data from scans can be translated into personalized designs using tools like 3D Slicer. Advanced software, including Fusion360 and nTop, further enables the creation of biomimetic structures. The exhibit will feature ceramic 3D-printed materials at various stages, demonstrating the entire process from pre-processing to post-processing and highlighting both the machines used and the final products designed for patient-specific bone-cartilage scaffolding and implant research.

- Large Group Winner: BEEBO - Robotic Arm for Education: Sam Hebbar, Barry Richter, Emma Mahoney, Max Barron, Ben Thomas, Andrew Tevebaugh

BEEBO provides an example of a control system that biomedical engineering students can watch, manage, and edit to better understand how control systems are set up and applied in a biomedical setting. A robotic arm is one of many examples of a rehabilitative assistive device.

- Small Group Winner: NanoPower Solar Cell & LEDs: Experiment & Explore!: Katelynn Fleming, Elijah Sacchitella, Mahan Sahafipourfard, Anthony Mazur

Ever wondered how solar cells or LEDs work? Turns out, they're closely related. At this exhibit, get your hands on real solar panels and hydrogen-generating tubes to discover how they generate electricity and how shade and time of day affect them.

2023 Small Group Award: G.L.O.V.E: Assistive Kitchen and Bathroom Gloves

Team: Max Likens, Shannon Martin Ignaffo, Ri Adukure, and Parker Herman

2023 Large Group Award: Alt-Andalus: An Exhibit of a Medieval World That Never Existed

Team: Trent Hergenrader, Annie Barber, Willow Collopy, Ryker D'Angelo, Jess Edwards, Jess, Juliana Falcon, Ace Gray, Justin Kennedy, Nic Lande, Miranda Lenaghan, Patrick Mitchell, Zoe Nast, Taode Ogden, Henry Orsagh, Emily O'Shea, Marlowe Pagerey, David Sterling, Quinn Sullivan, TK Sylvester, Aubrey Tarmu, Beau Wacker, Rainey Walker, and Maddy Whelan

The Fram Advisory Board also recognizes these teams as Honorable Mentions:

- Greater Fuel Efficiency Through Vaporization: Team: Carson Tosta

- Painted World: Neo-Versailles: Team: James Zilberman, Evan Riley, Megan Schier, Lucas Diamond, Chase Call, Alec Carter, and Holly Allen

- CGM for Cats and Dogs: Team: Joey Testa, Renee Banagan, Anya Fiolsi, Ryan Snyder, Lauren Zeglen, and Lily Mussallem

- Magnetic Radiation Shielding for Space Applications: Team: Max Wolbeck, Tevin Hendess, Benjamin Stuhr, Joshua Yoder, Samuel Cashook, Claire Kreisel, Coby Asselin, Connor Levine, Will Wright, and Braley Lanchner

- Marketplace Melee: Team: H Rose, Josh Clemens, Jay Sanford, Nic Lande, and Tyler Palmiter

- Information Assistance System for the Elderly: Team: Jude Chudi Okpala, Hu Shaohua, Liu Zhuodong, Zhang Bohan, and Shi Angye

- The Nine Dot Problem: Team: Alances Vargas

- Making Technology Accessible to the Elderly: Team: Jude Chudi Okpala, Jiang Hanzheng, Dong Hanfeng, Hao Jiafu, Wang Yanhe, and Li Fangrui

- Project G.A.R.D.E.N.S: Team: Hannah Bailin, Sebastian Moreano-Mesa, and Emily O'Shea

- We're Ready for Science! The Best of Friends: Biology and Art: Team: Elizabeth Perry, Tassia Garrison, Maya Sullum, Lauren Schack-Sehlmeyer, David Brassies, Hailey Shepherd, Karthikha Sri Indran, Maya Quaranta, Ella Lewis, Jack Herz, Sasha Markle, and Aalisah Wynn

2022 Small Group Award: Ruminations

Team: Maria Kane

Abstract: This project explores the combination of traditional art with modern technology. By overlaying augmented reality (AR) on woodblock prints, the artist examines the complex nature of mental illness. Each AR experience centers on a particular mental illness. The goal is to evoke empathy in the viewer by portraying mental illness visually, beyond a diagnosis or definition, since mental health is more of an experience than something concrete.

2022 Large Group Award: GET HEALTHY GET RITch®: Interactive Platform, Simulation and Digital Tools

Team: Caleb Vaccaro, Marc Molnar, Kevan Beemsteboer, Tirzah Pilet, Deon Allen, Kristy Kelley, Qian Li, Mini Mathai, Marielsy Pimentel, Kalpana Sundaram, Noora Abdulkerim, Tim Baer, Jaime Elizabeth Blackmon, Thomas Chacko, Eljada Gjoka, Dustin Haraden, Kayci Hauser, Joanne Raptis, Constance Rose, Siena Tugendrajch, Leah Ward, Johnathan Wright, Dr. Cassandra Berbary, Dr. Caroline Easton, Dr. Cory Crane, Dr. Richard Doolittle, Dr. Tory Toole, Dr. Rupa Kalahasthi and Dr. Celeste Sangiorgio

Abstract: Research indicates that approximately 50% of individuals who experience a substance use disorder also experience a co-occurring mental health condition; however, more than half of these individuals do not receive treatment. The introduction of technology into the mental health field promises to address many of the barriers to receiving treatment. Technology-based treatment tools offer a low-cost, efficient, comprehensive, and sustainable platform that can be widely distributed. This interactive exhibit highlights technology-based behavioral health tools, including a behavioral health avatar coach, a 3D substance use prevention tool, and a digital emotion measurement tool.

The Fram Advisory Board also recognizes these teams as Honorable Mentions:

Jungle Jam

Team: Anna Leung, Will Salerno, Devin Kirkwood, Simon Morrier, Dylan Gomer, Hun Choi, Andy Huang and Andrew Beach

Abstract: Jungle Jam bridges the physical and digital worlds of interaction. You and your friends are camping in the jungle. You’re about to go to bed when, all of a sudden, hungry wild animals come out to attack you! In this fast-paced game, players use a physical slingshot to launch food at a projection of hungry animals. A real-life camping display also invites people to take pictures as a souvenir. This project sits at the intersection of all that New Media Design and New Media Interactive Development offer: design thinking, problem-solving, visual communication, motion graphics, and creative programming.

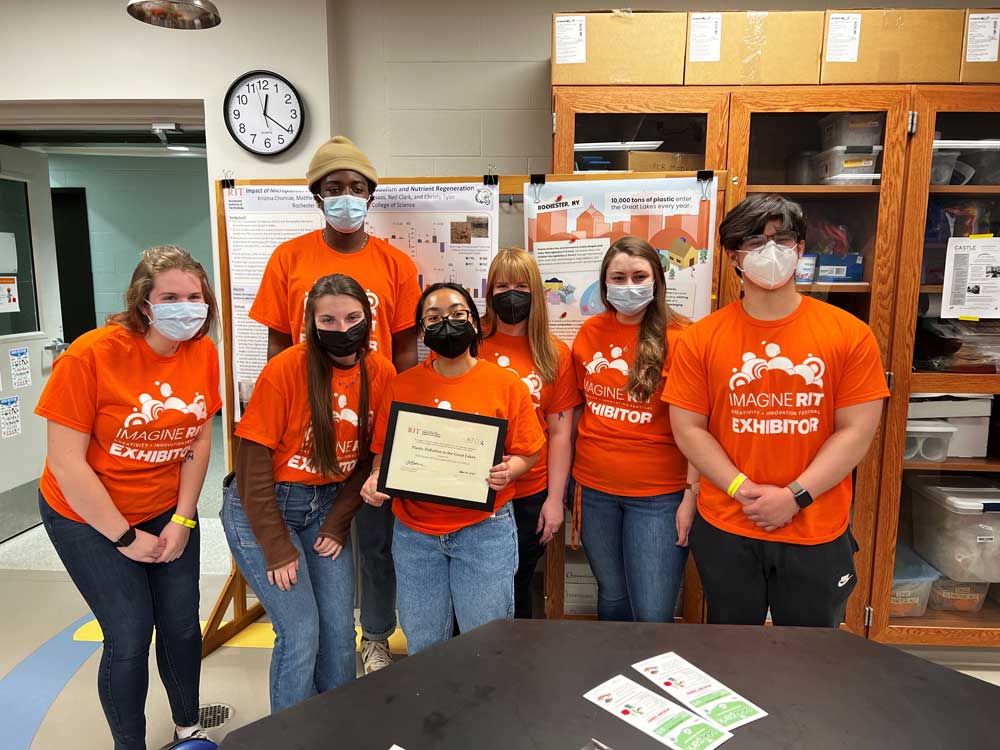

Plastic Pollution in the Great Lakes

Team: Vanessa Baker, Carmella Bangkong, Olivia Martin, Kristina Chomiak, Dr. Matthew Hoffman, Dr. Nathan Eddingsaas, Dr. André Hudson, Kaeti Stoss

Abstract: This exhibit shows the different types of plastic pollution, the tools used to study it at RIT, a visualization of how it moves through Lake Ontario, and what the research group is doing to remove debris in the Rochester area. Visitors can get information on local cleanup efforts and the group’s installation of new trash capture devices around Rochester. This project is the work of an interdisciplinary research team spanning the sciences and engineering.

2019 Small Group Award: Food Waste Survey

Team: Jessica Peterson

Abstract: One of the greatest challenges in managing food waste is participation: people must take part in order to keep food waste out of landfills and divert it so it is used as a resource rather than treated as waste. Households account for about 48% of food waste in the US, so participation at the household level is key to managing food waste as a resource. The survey seeks to understand household perceptions of food waste management and to identify the barriers, so we can generate solutions or new services that overcome these challenges and get more households participating!

2019 Large Group Award: Forensics Tech and Tactics

Team: Tia Hose, Balen Wolf, Gabriel Corales, Jesse Pickard, Holly Elder, Jade Mullen, April Dumlao, Lin Hui Jiang, Luke Nearhood, Mariam Dzamukashvili, Mary Yuen, Sakinah Abdul-Khaliq, Tommy Tran

Abstract: Our exhibit looks at the different forms of collecting and analyzing forensic evidence. We compare and contrast various methods and try to determine how reliable each one is. We have interactive games to test the crowd on their crime-solving abilities.

2018 Small Group Award: Commercializing Pumice Roofing Tiles in Nicaragua

Team: Thomas J. Higgins III, Karina Alexandra Penaloza, Camila Mota Oliveira

Abstract: This exhibit features the capstone project of three MS Management students working to help an engineering team from KGCOE commercialize its pumice roofing tile design in Nicaragua. The students are formulating accurate statistical projections to support recommendations for a go-to-market strategy for a new business in El Sauce, Nicaragua. They are combining statistical agency reports on key economic factors (e.g., GDP per capita growth projections) with local vendor quotations and estimates to try to extrapolate potential market penetration, growth, and success factors based on the chosen business operation strategies for this new product.

2018 Large Group Award: Composting & Recycling: Imagine Sustainability & Food

Team: Stefano Alfredo Agostino, Carmella Mapong Anak Bangkong, Sarah Brooke Bentzley, Matthew Bollinger, Scott Alexander Carlton, Ashley Casimir, Mercy Chado, Alyssa Christner, Ibrahim Cisse, Joshua Thomas Dunn, Tyler Joseph Gamble, Austin Joseph Giacomelli, Aleksandrs Huck, Eric Kelleher, Ethan J. Koval, Katherine Rose Larson, Joelle Christina Marston, Grayson Charles Morin, Alex Nieschlag, Jenny Rose Patterson, Morgan K. Rennie, Elizabeth Ciaccia Rintels, Hannah Elizabeth Schewtschenko, Corbin Frank Shamburger, Yang Shen, Jorge Daniel Soto, Amelia Millicent Sykes, Michelle Wong, Andrew Bernard Yoder, Violet Evening Young, Elizabeth Alice Moore

Abstract: With increasing global challenges such as climate change and inequality, integrated solutions are needed. The United Nations Sustainable Development Goals (SDGs), which include quality education, clean water, and no poverty, challenge everyone to achieve them by 2030. Our Sustainable Development class researched the sustainability pillars and identified a local sustainable development challenge in the context of the SDGs. Our exhibit highlights multiple ways to educate the local community on the role it can play in helping achieve the SDGs through proper composting and recycling practices that reduce waste, lower greenhouse gas emissions, and increase responsible consumption.

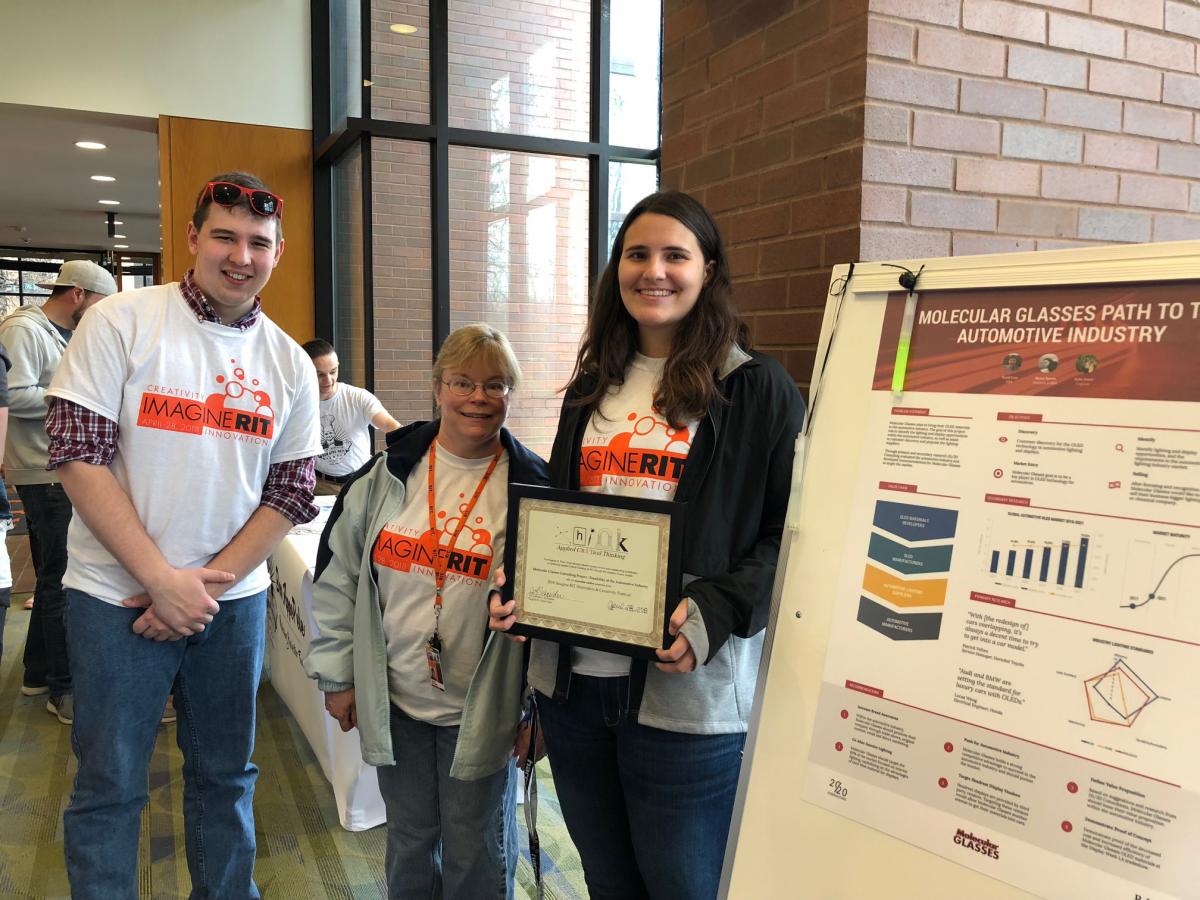

2018 Honorable Mention: Molecular Glasses Consulting Project - Feasibility of the Automotive Industry

Team: David Even, Katie Green, Rebecca Searns

Abstract: Our project presents our team’s findings and recommendations on whether Molecular Glasses, an OLED materials company, should enter the automotive industry. We took this broad question and conducted extensive primary and secondary research to answer it. We then applied further critical thinking to turn the wide breadth of knowledge we gathered into actionable data. The business concepts we applied and developed included a condensed value chain with a detailed channel analysis; a competitor analysis, including a SWOT analysis and a Porter’s Five Forces diagram; and a market analysis based on extensive research.

2017 Small Group Award: Ambio & Critical Thinking

Team: Julianne Burke, Josh Ladisic, Conner Hasbrouck, Jess Wiltey, Bennoni Thomas, Colton Woytas, Sudarshan Ashok, Vincent Lin

Abstract: We are a team of 5 designers and 3 developers creating a digital platform that bridges distance, helping people reconnect with their separated loved ones in a naturally intimate way by leveraging the latest wearable technology. While researching current technological innovations that could serve our goals, we discovered a technology that determines a user's mood from biological data such as blood pressure, heart rate, breathing pattern, and vocal tone. To explore how this technology could be shaped into a delightful product experience that solves our problem, we held ideation sessions and developed various concepts through multiple rounds of sketching and iteration. Business research into which platforms would best meet our users' needs revealed that smartwatch products such as the Apple Watch and Android Wear have growing market sizes and technological offerings that make our solution easy to implement. Using the API (Application Programming Interface) created by the development team, we iterated on different use cases and built a quick prototype, which was followed by rounds of feedback and further iteration. Through further analysis of the wearables market and advice from professors and alumni mentors in Silicon Valley, we identified a unique opportunity to create our own wearable product that lets users share their mood.

2017 Large Group Award: Using Innovations in Technology to Combat Violence (Simulation & Behavioral Health: Meet Avatars/SimMan)

Faculty, Staff & Community Industry Mentors: Dr. Caroline J. Easton (CHST, Professor/Researcher and PI); Dr. Richard L Doolittle (CHST, Professor/Faculty Researcher); Meghan Lewis, Alli O’Malley, Nicole Trabold, Cassandra Berbary, Lindsay Chatmon, Brittany St. Jean, Joshua Aldred, Akshay Kumar Arun, Anthony Perez, Karie Carita, Jason Chung, Keli DiRisio

Abstract: Violence is escalating and continues to contribute to the global burden of disease. Its negative consequences are devastating to families and to society as a whole. The health consequences are numerous and include trauma, substance misuse, depression, anxiety disorders, medical problems, and loss of work. The estimated cost of treating victims and perpetrators of violence is $5.8 billion each year. Compounding this problem is the absence of effective treatments for violence, especially for those who commit violent offenses. Clinicians and policymakers are looking for ways to intervene and treat the aftereffects of violence. We believe that advances in science (e.g., targeted, evidence-based behavioral therapy strategies) can be delivered through interactive technological platforms, which can standardize the way behavioral health problems are screened and treated. Violence is contagious and often multigenerational, so it requires every available means of intervention, delivered in ways that are easily disseminated and that allow access to evidence-based screening and treatment strategies.

2016 Small Group Award: Robotic Eye Motion Simulator

Team: Amy Zeller, Joshua Long, Nathan Twichel, Peter Cho, Jordan Blandford

Abstract: The objective of this project is to develop a robotic eye that mimics human eye movement to provide a standard for eye tracker testing, and to do so within a budget of $2,000. Our senior design team has used critical thinking since day one. It has allowed us to evaluate ideas and make informed decisions about our project. One of the biggest challenges our team had to overcome was selecting a motor for our design that both our team and our customer agreed on. An eye tracker is a device that tracks human eye movement and estimates gaze position. Eye trackers have long been used in psychology research, visual system research, and marketing, and recently as an input device for human-computer interaction. The quality of the data that eye trackers output is fundamental to any research based on eye tracking, yet there is currently no standardized test method for evaluating it. The lack of a standard may lead to research being based on unreliable data. Different manufacturers measure quality using their own methods, and researchers either measure it again using different methods or simply report whatever numbers the manufacturer provides. The robotic eye must also be affordable, which is necessary for eye tracker manufacturers and eye tracking researchers to use it practically as a standard. Therefore, our team set out to find a motor that cost less than $2,000, had a velocity of 8.73 rad/s, and had a repeatability of 0.015 degrees.

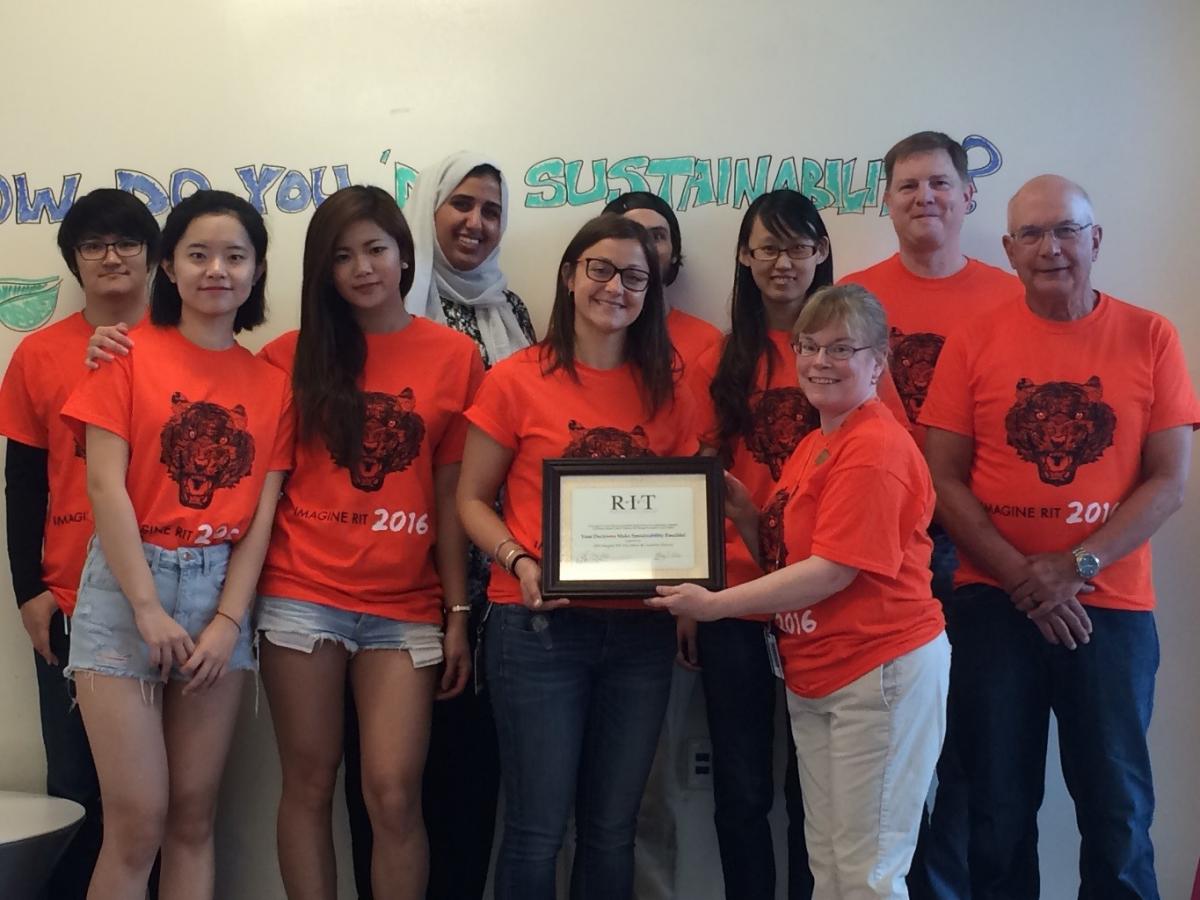

2016 Large Group Award: Your Decisions Make Sustainability Possible!

Team: Jennifer Russell (Coordinator, Golisano Institute for Sustainability); Reema Aldossari, Yi Feng, Shih-Hsuan Huang, Michael Kelly, Nicolas Matthew Miclette, Wilson Sparberg Patton, Wenjing Qi, Kaining Qiu, Elizabeth Stegner, Jiahe Tian, Akanksha Vishwakarma, Hui-Yu Yang, Yue Zhang, Runhao Zhao (Industrial Design Graduate Students)

Abstract: Our society is facing some significant environmental and social challenges. Some of these must be tackled through government and industry initiatives, but for many of them the most effective solutions can start right at home with the individual. Our increasing consumption of goods and services is putting significant pressure on our natural systems, as well as on our communities as they deal with increasing waste and resource constraints. We believe that, although consumers may feel helpless to fix some of these problems, they in fact have the potential to be among the most powerful drivers of needed change.

We use this Imagine RIT exhibit to explore how consumers make choices about the products they consume, and how their behaviors and evaluations are affected by new information. Specifically, we seek to understand how consumers evaluate a ‘greener’ product relative to a ‘normal’ product, and how the product characteristics ‘cool’ and ‘innovative’ interact in their choices.