Fixing the forgetting problem in artificial neural networks

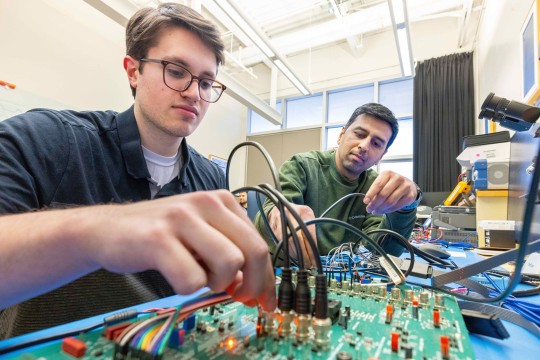

A. Sue Weisler

Christopher Kanan is creating brain-inspired algorithms capable of learning immediately without excess forgetting.

An RIT scientist has been tapped by the National Science Foundation to solve a fundamental problem that plagues artificial neural networks.

Christopher Kanan, an assistant professor in the Chester F. Carlson Center for Imaging Science, received $500,000 in funding to create multi-modal brain-inspired algorithms capable of learning immediately without excess forgetting.

Today, artificial neural networks (computing systems inspired by the biological neural networks that make up human brains) are in widespread use, powering everything from autonomous cars to facial recognition systems.

But because these systems differ in important ways from human brains, they have significant limitations.

“Everyone thinks that artificial intelligence has fully arrived and this stuff is going to replace and displace us, but there are some fundamental capabilities that the systems lack,” Kanan said. “Let’s say I want to teach a system something new—how do I do that? It’s not straightforward.”

Current systems are typically built using training sets to master tasks such as identifying patterns or objects, and are then deployed to perform that task in perpetuity. Teaching such a system a new task usually means rebuilding it from the ground up, because training it on the new task causes it to forget much of its previous knowledge, leaving it unable to perform the original task it mastered.

This phenomenon is called catastrophic forgetting. “When animals or humans learn, that’s not a way that our memories are structured. We don’t catastrophically forget when we learn new things,” Kanan said.

Kanan is basing his new algorithms on the complementary learning systems theory, which describes how the human brain learns quickly. The theory suggests that the brain uses the hippocampus to learn new information immediately, and that this information is later transferred to the neocortex during sleep.

“We want to make these systems learn over time, learn very quickly, and learn from individual experiences rather than batches of data,” he said. “They should be able to use old and new knowledge to correctly answer questions about the world.”

Kanan’s new approach to neural networks would have complementary components: one that learns quickly and another that learns slowly but generalizes. The compressed, general information is moved to long-term storage, where it can be retrieved when necessary, reducing catastrophic forgetting.
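The fast/slow division described above can be illustrated with a toy sketch. The Python below is not Kanan's algorithm. It is a hypothetical, minimal illustration that assumes examples arrive one at a time as plain feature vectors, uses a raw example store as the "fast" learner, and uses per-class running means (a simple nearest-class-mean rule) as the "slow" learner. The class `FastSlowLearner` and its methods `learn`, `consolidate`, and `predict` are invented for this example.

```python
import math


class FastSlowLearner:
    """Hypothetical toy model of complementary fast and slow learning.

    fast_memory: raw examples stored the moment they arrive (hippocampus analogue).
    means: compact per-class averages built only during consolidate()
           (neocortex analogue).
    """

    def __init__(self):
        self.fast_memory = []  # (features, label) pairs, stored immediately
        self.means = {}        # label -> consolidated mean feature vector
        self.counts = {}       # label -> number of examples folded into that mean

    def learn(self, x, label):
        # One-shot learning: the example is usable for prediction right away,
        # with no retraining pass over earlier data.
        self.fast_memory.append((tuple(x), label))

    def consolidate(self):
        # "Sleep" phase: fold each fast memory into its class's running mean,
        # then clear the fast store. Old classes keep their means intact.
        for x, label in self.fast_memory:
            n = self.counts.get(label, 0)
            mean = self.means.get(label, tuple(0.0 for _ in x))
            self.means[label] = tuple(m + (xi - m) / (n + 1) for m, xi in zip(mean, x))
            self.counts[label] = n + 1
        self.fast_memory.clear()

    def predict(self, x):
        # Consult both stores and return the label of the closest entry.
        candidates = [(math.dist(x, m), lbl) for lbl, m in self.means.items()]
        candidates += [(math.dist(x, fx), lbl) for fx, lbl in self.fast_memory]
        return min(candidates, key=lambda c: c[0])[1]


model = FastSlowLearner()
model.learn([0.0, 0.0], "cat")
model.learn([10.0, 10.0], "dog")
print(model.predict([1.0, 1.0]))  # cat (answered from fast memory)
model.consolidate()
model.learn([0.0, 10.0], "bird")
print(model.predict([0.0, 9.0]))  # bird (new knowledge)
print(model.predict([1.0, 1.0]))  # cat (old knowledge retained)
```

Averaging examples into one vector per class is only a crude stand-in for "compressed general information"; a real system would learn its feature representations, which is where forgetting becomes hard to avoid.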

Using both text and visual cues, the systems would be able to classify large image databases containing thousands of categories. They could then operate autonomously with limited human supervision.

That autonomy would be a major improvement over existing systems, which rely heavily on annotated data and human supervision, limiting their utility.

Kanan also envisions his improved algorithms being extremely lightweight, meaning they would not require advanced computing resources such as supercomputers and could run on a device as simple as a smartphone.

This means that people could deploy continually improving learning systems to help at home rather than relying on Internet-based cloud services that store private information on external servers.

Kanan and his team of imaging science students began working on the project in October, and the NSF grant is slated to continue through September 2022.