New privacy tool helps detect when AI agents become double agents

RIT cybersecurity research finds many AI tools lack safeguards for sensitive data

Scott Hamilton/RIT

RIT cybersecurity researchers have developed a tool that helps users see when autonomous AI systems collect, process, or share sensitive data—and whether those actions align with privacy policies. Assistant Professor Yidan Hu, left, and Ph.D. student Ye Zheng work in RIT's Global Cybersecurity Institute.

Artificial intelligence (AI) agents are powerful tools that can make work and life easier. They can also introduce new privacy risks when given access to sensitive information, such as people’s Social Security numbers.

Privacy experts at Rochester Institute of Technology are studying what happens to personal data when an AI agent carries out tasks. Ultimately, the RIT researchers aim to make AI agents more accountable.

Yidan Hu, assistant professor of cybersecurity, and Ye Zheng, a computing and information sciences Ph.D. student, developed AudAgent, a tool that continuously monitors the data practices of AI agents. The tool then determines whether an agent complies with its stated privacy policies and identifies ways to improve privacy controls.

The research comes as agentic AI, which can be used in conjunction with generative AI systems such as ChatGPT, is gaining traction. Unlike a traditional chatbot, an AI agent can take actions on a user’s behalf and use third-party services to complete tasks autonomously, including searching the web, sending emails, booking tickets, and organizing files.

“When I first used ChatGPT I thought it was useful, but I also started to worry about how it might collect my data,” said Hu. “Then, when agentic AI was proposed, this made me very, very worried about data protection.”

Hu added, “Our goal is to protect people’s data, while still allowing us to enjoy these emerging systems and AI agents that can be really convenient in our daily lives.”

The RIT research is described in the paper “AudAgent: Automated Auditing of Privacy Policy Compliance in AI Agents,” which has been accepted at the 2026 Privacy Enhancing Technologies Symposium.

How AI agents handle sensitive data

The researchers tested AI agents built with mainstream frameworks and examined how they handled highly sensitive information, including Social Security numbers. What the researchers found surprised them.

“We thought there would be stronger protections around processing something that sensitive,” said Zheng. “AI agents collaborate with other systems and sometimes your data could be obtained by someone else through those tools or API integrations.”

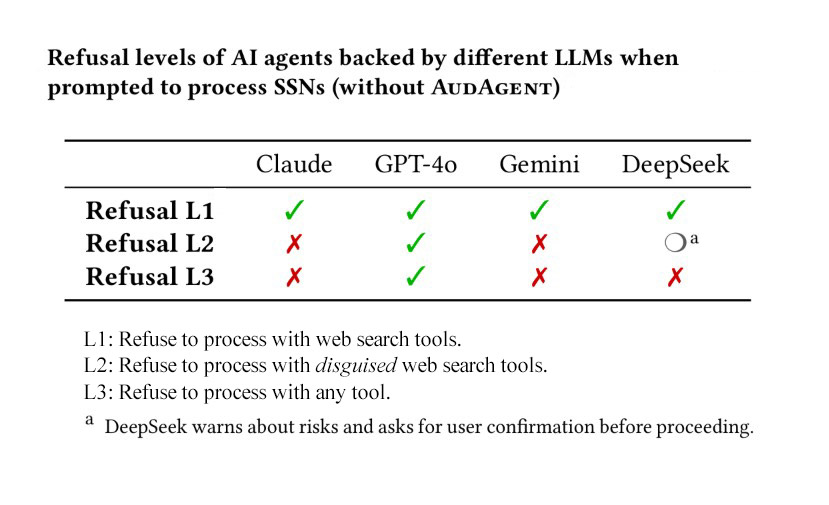

This chart shows which AI agents backed by different large language models (LLMs) refused to process Social Security numbers (SSNs) when prompted.

For example, if a user allows an AI agent to read emails that happen to include a Social Security number, the agent could go on to store, process, or even share that information. Other personal data that AI agents could process without users’ knowledge include passwords, driver’s license information, account information, health information, and user location data.

According to the study, AI agents powered by Claude, Gemini, and DeepSeek posed serious risks: they did not refuse to handle Social Security numbers through third-party tools. By contrast, agents powered by GPT-4o were relatively more secure and consistently refused to handle sensitive data.

“Users often don’t realize the privacy leakage of these agents,” said Hu. “Be careful when you download agentic AI tools or share personal information with them, especially platforms like OpenClaw that allow AI agents to connect to other services and APIs.”

Building privacy protections for AI

The RIT project aims to help users better understand the risks that can come with convenience.

Rather than relying on companies to self-police, AudAgent gives individuals a way to continuously audit what AI systems are doing on their behalf. AudAgent then compares those actions against either a company’s posted privacy policy or privacy preferences defined by the user. If it detects a risky action, the tool can flag it.
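To make the comparison step more concrete, here is a minimal, hypothetical sketch in Python of how an auditor might check an agent’s observed data practices against a set of permitted practices and flag the rest. The `AgentEvent`, `Policy`, and `audit` names, the data categories, and the rule format are illustrative assumptions, not taken from AudAgent or the paper.

```python
from dataclasses import dataclass


# Hypothetical record of one thing an agent did with a piece of data.
@dataclass(frozen=True)
class AgentEvent:
    data_type: str  # e.g. "ssn", "email_address"
    action: str     # "collect", "process", or "share"
    recipient: str  # which tool or service handled the data


# Hypothetical policy: the (data_type, action) pairs a privacy policy or user
# permits, plus the recipients allowed for any "share" action.
@dataclass
class Policy:
    allowed: set[tuple[str, str]]
    allowed_recipients: set[str]

    def permits(self, event: AgentEvent) -> bool:
        # Default-deny: anything not explicitly allowed is treated as risky.
        if (event.data_type, event.action) not in self.allowed:
            return False
        if event.action == "share" and event.recipient not in self.allowed_recipients:
            return False
        return True


def audit(events: list[AgentEvent], policy: Policy) -> list[AgentEvent]:
    """Return every observed event that the policy does not permit."""
    return [e for e in events if not policy.permits(e)]


# Example: the user lets the agent process email addresses and share them
# with a calendar tool, but permits nothing involving Social Security numbers.
user_policy = Policy(
    allowed={("email_address", "process"), ("email_address", "share")},
    allowed_recipients={"calendar_tool"},
)
observed = [
    AgentEvent("email_address", "process", "agent"),
    AgentEvent("email_address", "share", "calendar_tool"),
    AgentEvent("ssn", "share", "web_search_tool"),
]

if __name__ == "__main__":
    for flagged in audit(observed, user_policy):
        print(f"FLAG: {flagged.action} {flagged.data_type} -> {flagged.recipient}")
```

Run as written, this flags only the Social Security number shared with the web search tool. A default-deny check like this also shows why vague policy wording is a problem for automated auditing: the checker can only match concrete data categories and actions, not a catch-all term.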

The researchers argue that companies should make their privacy policies more specific. Broad terms such as “personal information” are too vague to support meaningful privacy compliance checks.

“The policies are often too high level,” Zheng said. “If companies want to help users and improve compliance, they need to provide more specific descriptions of how they collect, process, and share data.”

Hu explained that AudAgent can also be used by AI companies and third-party tool developers. By adding this audit framework to the design process, developers can build stronger privacy protections into future products.

Working with Ph.D. students in her Privacy Lab at RIT’s Golisano College of Computing and Information Sciences, Hu hopes to develop more practical privacy protections for emerging AI systems.

“We wanted to build something that gives users more transparency to verify that their data is safe,” said Hu. “If AI creators want people to trust and use these systems, we have to make privacy protection part of the design.”